Cullen Irwin, Wake Forest University School of Law JD ’26

The Oracle Engine

It was August and too hot for a heavy conversation. Momma turned left down Briar Hollow Street, saying, “You ought to visit that college in Dodson. Make something of yourself.”

“Frankie went there last year. He hates it,” I told her for the fourth time.

“Frankie’s a good boy, but he never worked the hardest. Remember that game out in Holly Springs? Didn’t he show up on pot or something?”

“Momma.” I rubbed my temples. “I’m not like Frankie, no. I’m not interested in a community college or trade school or anything. You know I want to write.”

“They have classes for that sort of thing there, Stephen,” she said, knuckles pale on the steering wheel.

“I don’t know any renowned authors from Dodson Community College, Momma. Let’s just get to this clinic already.” I winced, pressing my hand to my stomach. “Feels like someone’s twisting a wrench in there, and I do not feel like talking college again.”

She pursed her lips in acceptance, the kind that means “we’ll revisit this later.” After what felt like a road trip through hell, we arrived at Surrie Regional. It looked more like an elementary school than a hospital. It was wide, blocky, and too proud of its new brick walls, the kind that shine orange in the sunlight but seem too chalky to be real.

Inside, the air smelled of lemon cleaner and cold coffee—a smell that makes you think it’s hiding something.

The woman at the desk smiled without using her eyes. “Insurance?”

Momma fumbled with her purse. “Just the state plan, ma’am. His stomach’s been hurting him for about a week now.”

The woman nodded and handed Momma a clipboard. “Go ahead and sign and fill that form out, listing all of your symptoms and health information. Once you return that to me, I’ll run it through our fancy new system. We just got it this week.”

The “fancy new system” was a flat-screen monitor device sitting on the reception counter. It somehow faced the receptionist but was also angled like a bright and shiny trophy for the patients to see. Its screen emitted a faint blue light across the dull waiting room.

I leaned on the counter and squinted at it. “What is that thing?”

“Oh,” the receptionist said proudly, “that’s our new AI diagnostics partner—DiagnoSmart. It reads your intake forms, vitals, and symptoms, then recommends what kind of provider you need to see and even helps the doctor make a diagnosis.”[1]

Momma smiled, impressed. “Isn’t that something?”

“Ha. Those city offices can’t keep all the new goodies for themselves,” the receptionist joked. “It helps cut down on diagnosis mistakes.”

“Cool,” I muttered. “Cuts down on people, too.”

Momma didn’t laugh. She never did when she thought I was being a smartass. I was.

We sat under buzzing fluorescent lights. As we filled out the clipboard, I stared across the room at the TV, which hummed the morning news on low volume. I tried to focus on the crawl of headlines rather than the ache pulsing through my stomach while I answered the questions Momma read out to me.

Shortly after Momma delivered the clipboard to the desk, the nurse called my name.

“Room three, hon. Dr. Green will be with you soon.”

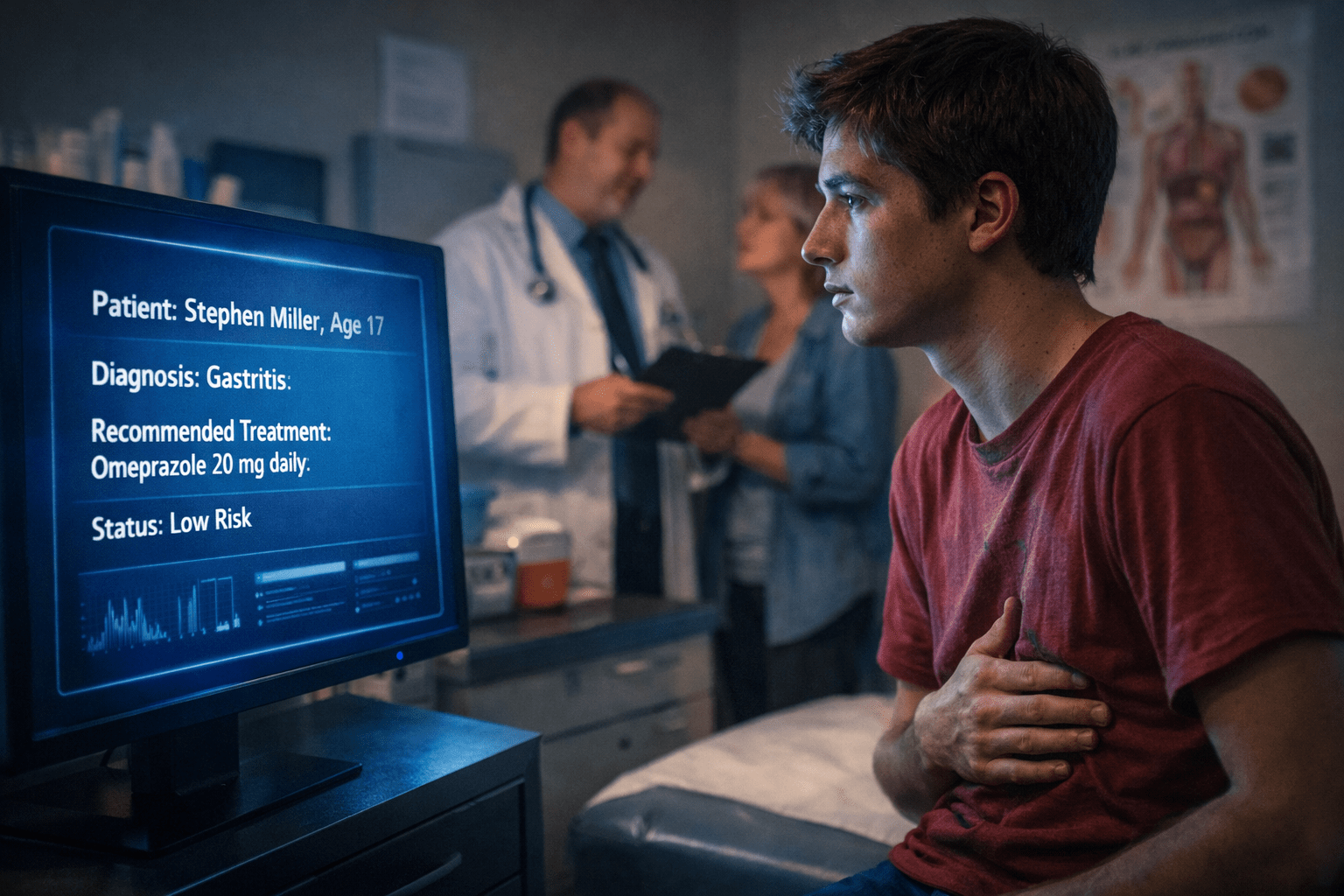

The exam room smelled like the waiting room, minus the coffee, and it looked like a closet pretending to be a space station. One of those monitors was in here blinking blue. The screen read:

Patient: Stephen Miller; Age 17; Symptom cluster suggests minor gastritis. Recommended treatment: 20 mg omeprazole daily. Follow-up discretionary, not required.

Dr. Green walked in minutes later. “Hey, folks.” He glanced at the screen. “Looks like the system’s given us some direction,” he said, smiling as though the machine’s verdict relieved him of a burden. “I am Dr. Green. It is nice to meet you.”

“Hi, thanks for seeing us, Dr. Green,” Momma replied.

“My stomach’s been killing me,” I said. “Sometimes I wake up at night and it’s burning.”

“Gastritis can feel that way. It is an irritation of the stomach lining. Let me go ahead and double-check your symptoms. You’ve been feeling heartburn, stomach pain, and bloating?”

“Yes,” I said.

“You’re young and otherwise healthy?”

“I guess. Feeling pretty tired recently.”

He tapped on the blue screen. “Ah, that’s normal with something like this. I am feeling tired myself.” He laughed.

After waiting a moment, Dr. Green raised his voice like the end of a pep talk and said, “Yep. Let’s try that omeprazole and see how it works for you. Your symptoms indicate gastritis, and this DiagnoSmart machine is even more accurate than I am.”

Momma nodded eagerly. “He’ll do that, Doctor. Thank you.”

I looked between them—him trusting the screen, her trusting him—and wondered who was trusting me.[2]

As we left, I glanced back at the blue-lit monitor screen. Dr. Green had updated the chart.

Diagnosis confirmed: low risk.

Outside, the sun had dropped behind the parking lot oaks. Momma turned to me and smiled in that soft, tired way she always did when she wanted to believe something. “See? Just indigestion, honey. You’ll be fine.”

“Yeah,” I said, though my stomach felt uneasy. “I’m good.”

* * *

By the time two months had passed, my pills had become another household ritual. Momma continued to set the orange bottle next to the old coffeemaker like it belonged there. Even though I’d visited Dr. Green twice now, I didn’t feel any better. “Low risk,” Momma chirped, parroting a doctor parroting a robot.

Sometimes the pills felt like they worked, and when they didn’t, Momma would look genuinely surprised every time. I started wondering if the pain was in my head because it sure seemed like everyone else thought it was. Or maybe they weren’t thinking at all. Maybe they were just trusting the machine the way you’re supposed to. Still, I learned to keep my food down by pressing a hand against my stomach like I could hold it in place, and I’d say, “I’m fine,” before Momma could even ask. But “fine” had a shelf life.

One Thursday, during third period, my hand-holding trick didn’t work, and my lunch, the half that I could get down in the first place, came back up onto the linoleum floor next to my desk. I am still not sure what felt worse: the classroom’s symphony of “Ewwws” or Momma telling me, “We’re going back,” while we stood in the school parking lot.

At Surrie Regional, we were greeted by the same orange brick and lemon-coffee veil as on the last two visits. The only difference was a new banner flying high outside the hospital’s walls: NOW POWERED BY AI-DIAGNOSTICS.

After we checked in with the receptionist, a nurse quickly called my name and directed me to room three, same as last time. DiagnoSmart sat proudly on the desk, its blue screen flickering. Dr. Green shuffled in after what felt like a lifetime, looking more disheveled than the last time I’d seen him, with darker half-moons under his eyes. He didn’t look at me. Instead, like a moth in summer, he buzzed straight to the blue light.[3]

“Feeling the same as last time . . .” He paused, glancing at the top left of the screen. “Stephen?”

“Yes,” I responded. “I feel a little worse actually.”

Momma jumped in, exhaling as if she had been holding her breath underwater for minutes. “He threw up at school this afternoon. He’s been losing some weight, too.”

Dr. Green spun around in his chair and, for the first time, looked me in the eyes. He gave a reassuring half-smile. “That’s pretty typical in chronic gastritis cases. In fact, DiagnoSmart is still flagging you as low risk,” he explained. “It’s reading your symptom pattern as consistent with chronic gastritis, possibly anxiety-related.”

“So, he’s okay?” Momma asked.

“Well, low risk doesn’t mean no risk,” Dr. Green said gently. “It means the probability of something malignant based on the data is very small. These tools are excellent at pattern recognition. They’re trained on an enormous number of cases.”[4]

He paused like he was stepping into a speech he’d given a hundred times. “I’m required to tell you the accuracy of these systems,” Dr. Green said. “Across large studies, their diagnostic accuracy is roughly 95% in cases like yours, which, frankly, is much better than any single provider’s judgment alone.[5] There’s still a small margin of error because nothing’s perfect, but that’s why we follow up when symptoms persist.”

“So what now?” I asked.

Dr. Green tapped the screen once more. “Given that you’ve been on omeprazole for two months with only partial improvement, if any, the next reasonable thing to do is an endoscopy.[6] It’s where we use a small device, weave it down the throat, and look around in the stomach. It lets us see your stomach lining, check for ulcers, inflammation, and anything more serious. We can take small biopsies if we need to.”

Momma blinked. “Is that dangerous?”

“No, ma’am. He will be under moderate sedation, which my nurse will discuss with you in detail. We do these all the time.” He glanced at the screen. “And this one is covered under your plan. You wouldn’t be paying out of pocket.”

Momma exhaled in relief. “Then yes. Let’s do it.”

I felt my stomach twist. I had spent the last two months trying to ignore what my body was telling me, what it was trying to get me to tell others. Now, finally, someone listened and was going to take a look.

“All right,” Dr. Green said, standing to leave. “We will do it tomorrow morning. See you then.”

As the nurse walked in with forms, I sat there wondering whether “we” included me or the machine or both. The consent packet seemed as thick as my math textbook, and Momma read every line. I watched the blue monitor as the nurse explained the sedation, recovery, and “rare risks.”

The next morning, I was face up on a narrow bed in a prep room that smelled like rubbing alcohol. The nurse put a bracelet on my wrist and asked me what music I liked to calm my nerves.

“Anything but country,” I answered, as Dr. Green walked in to check on us.

Momma chuckled softly, and Dr. Green smiled under his mask. When they rolled me into the procedure room, I was enveloped by a dim blue light. Another DiagnoSmart screen floated next to my bed, sitting contentedly and ready to dissect my every cell.

“Just count backwards from one hundred for me,” the anesthetist said. I made it to ninety-seven.

* * *

When I woke, my throat felt scraped raw, like I’d swallowed sand. Momma was sitting by my bed, squinting at a pamphlet printed in small text.

“Hey,” she said quickly, brushing hair off my forehead. “You’re okay,” she continued, reassuring herself.

Dr. Green entered a moment later with a folder under his arm. He sat down, saying, “Okay, Stephen, all done. I’ll tell you what we saw.”

I nodded, throat too dry to speak.

“The lining of your stomach showed mild inflammation. No ulcers. No masses. There was minor bleeding in a few spots, but that is not unusual.[7] We also took a few biopsies just to be thorough, but visually there’s nothing to be very concerned about.”

Momma’s shoulders dropped so hard I thought she might fold. “Thank you, Lord,” she whispered.

Dr. Green nodded respectfully, then continued, “DiagnoSmart’s reading of the imaging we took confirms this diagnosis. It seems to be chronic gastritis as previously discussed. This kind of thing can be stubborn, and stress can make it worse.”[8]

“But how am I in so much pain?” I asked. “With only mild inflammation?”

“Well, gastritis certainly is not pleasant. And like I said, stress can make this kind of thing worse. Have you been under increased stress lately?” he asked.[9]

“I mean, college admissions has been on my mind, and this whole thing is stressful. Not eating is stressful. Thinking I am going to throw up at school is stressful.”

“Sure,” Dr. Green said gently. “Of course it is. I think the best medicine for you is rest. If you need to miss class because of stress caused by your stomach, I can give you a note. Just reach out,” he said, looking to Momma. “Also, I am switching you to esomeprazole.[10] It is a bit stronger than omeprazole and hopefully will nip this thing in the bud.”

I tried to believe it. I really did. The doctor had looked inside me. The machine agreed. The logic was sound. But still, when I glanced past Dr. Green at the dimmed blue monitor, it felt like it was watching me the way a sports announcer watches a game, calling plays based on patterns and never wondering how it feels to be hit.

Momma squeezed my wrist. “See baby? You’re fine.”

I nodded and tried to trust everything but the ache twisting in my stomach.

* * *

I sat in bed, journal resting on my bent right leg, while the heat of the laptop bled through the sheets next to my left thigh. I continued to write my tale of a boy who lived in a fantastic ancient city that worshipped an advanced industrial technology:

In the city of Lyrien, no one lined up outside the old healers’ doors any longer. Instead, they climbed the hill to the Oracle Engine, which sat at its peak, a glass heart in a cage of brass ribs, fed by thin streams of river water. When you stood on the stone circle before it, the Engine opened its many eyes of crystal and breathed in everything it could measure: your pulse, the tremor in your hands, the heat rolling off your skin, and the bend in your spine. Then, after whistling steam for three ticks of time, it closed its eyes, and its heart delivered its verdict. A pale red warned of dire illness, a faint purple said to visit the herbal ministry clinics, and a light blue signaled little danger . . . .[11]

Halfway through my writing, I stopped, set down my journal, and picked up my laptop to type a question into Google: “Does AI misdiagnose patients?”

Ten minutes and three articles later, Momma walked in the door to my left. From the doorframe she could see my screen. Damn, I thought, as large bolded letters read “Study Finds AI Medical Tools Show Bias, Potential for Misdiagnosis and Patient Harm.”[12]

“Stephen!” she snapped. “Sorry.” Momma paused, then spoke more gently. “You’ll worry yourself sick looking at those things. Dr. Green said everything is okay, honey. He looked in your stomach. It’s only inflammation, hon. I promise.”

“I know,” I responded, shutting the laptop. “I was only wondering.” Wondering why I still felt this pain, months later. But I didn’t say that out loud.

“Good night, honey. Get some rest,” Momma said. “You need it.”

She shut the door.

* * *

The day I collapsed, the sky was one of those blue skies you so often read about in stories but see too infrequently to remember they’re real. A beautiful November day, shadowed by small, wispy clouds and a quiet dread that you don’t recognize until it’s too late. We were in English class, last period, and Mrs. McCollum had us doing senior presentations. “A piece of yourself,” she called it. She wanted us to share with the class something we had done or created during our time at Surrie High.

I chose to read the short story I had written about the boy Corin. Not because I thought it was good, but because it was the only way I could tell the truth of my pain without telling anyone about me.

When Mrs. McCollum called my name, my legs felt unsure. I stood anyway. Everyone watched in their lazy teenage way as I stood between them and their weekend. I read the first paragraph fine, voice shaky. I blamed it on nerves. By the bottom of the first page, the room started tilting. I went on, steadying myself against the side table to my right. When the pain hit, though, the ink began dripping across the page, words bleeding together. All I remember is someone yelling for the nurse and my friend Will saying my name like he didn’t recognize it. The rest is flashes.

I saw the nurse’s office, breathed in oxygen through a tube under my nose, smelled Momma’s perfume as she put a hand on my head, and felt the pull of a car coming to a rolling stop.

In the ER, everything was a blur, but when I woke, a doctor with tired eyes and a steady voice was saying my name. “Stephen?”

“I am Dr. Patel,” he said. “Do you know where you are?”

“Surrie Regional?” I asked.

“No, you’re at Ogdon Health Hospital in Dodson.”

“Surrie doesn’t have what they need to care for you, honey,” Momma said.

“Stephen, if you are okay, and if you are ready, I’ll go ahead and tell you what we found,” Dr. Patel said.

I nodded.

He took a breath. “There’s a mass in your stomach. It’s large, and it appears aggressive.” He paused, then continued. “The imaging suggests it has already invaded beyond the stomach wall. We are consulting oncology now.”

“A mass?” Momma asked, her eyes confused.

“Yes. Cancer.”

I croaked a chuckle. It wasn’t funny; it was perfect.

Momma’s voice cracked. “But the endoscopy. Dr. Green said—”

“Endoscopies can miss lesions depending on their location, among other things,”[13] Dr. Patel said carefully. “And some gastric cancers can progress quickly.[14] Still, given how long this went on, I would’ve wanted CT imaging even with a normal endoscopy. Honestly, an endoscopy was probably called for on your second visit. If I understand his records, Stephen got the endoscopy in October? Over two months after the first visit?”

Momma paused, trying to take in everything she was hearing. “Uh, yes, doctor.”

“I am sorry.” He paused. “And I am not here to second-guess another physician. But I will say this. When a diagnostic-support system keeps repeating ‘low risk,’ it can push people into a tunnel. Your son’s course called for stepping back and asking what else could explain it, AI or not. That didn’t happen soon enough.”

Dr. Patel pulled up a scan on the screen by my bedside. It showed my insides in inhuman shades.

“Here is the mass,” he said.

“Is it . . . is it operable?” Momma forced out, scared of the answer.

Dr. Patel’s face didn’t change. “At this stage, surgery is unlikely to remove the mass completely. We can talk about treatment options like chemotherapy and radiation, but I am very sorry to say that the cancer looks to be . . .” He paused, looking down and breaking composure. “Terminal,” he finished and met Momma’s eyes.

When Dr. Patel left, neither of us spoke for a while. Finally, Momma pressed her hand to my knuckles. “We did everything right,” she said. “Everything they told us.”

“I know,” I responded in tired acceptance.

“Then how . . .” she trailed off.

I had an answer but didn’t say it.

* * *

Eliza’s desk creaked under the weight of stacked case files, two coffee mugs, a picture of her and her father at the Surrie rodeo fair, an old dust-laden oral advocacy award, and, maybe most of all, the stress in her shoulders as her elbows leaned on the old mahogany. It had already been a long day, so when her receptionist called to say that the mother seeking help with a medical malpractice case had arrived, Eliza took a deep breath and glanced out her office window at the taillights of her Jeep Grand Cherokee. She exhaled a sigh and slowly worked her eyes over to the coffee machine in the left corner of the room. Enough water for another cup, she thought. Eliza shook her head. So much for the “cut down on caffeine” New Year’s resolution.

“Send her in in five, Betsy,” Eliza told the receptionist.

The door clicked behind the mother with a soft finality. She was younger than Eliza had expected. Mid-thirties maybe. She stepped over to the chair before Eliza’s desk.

“Mrs. Hart?” Eliza asked, standing and offering her hand.

“Um, yes,” she responded quietly, taking Eliza’s hand in a tired grasp. “Call me Mara,” she said as she sat stiffly.

“Okay, Mara, I read the general information you sent to my office yesterday and understand what happened involved an AI diagnostic system run by Surrie Regional Hospital, correct?”

“That’s right,” she said.

“I’m sorry,” Eliza said simply. “Tell me what happened in more detail, and please start wherever makes sense for you.”

Mara nodded and looked at her hands. She began a story that sounded orderly and rehearsed, as if she had practiced it in the mirror, covering Stephen’s pain, his three visits to Dr. Green, the endoscopy, and his incidents at home. At the end, she described the collapse, Ogdon Health, Dr. Patel, and the word “terminal,” uttered like a verdict. When she got to that part, her voice shook, its neatness gone.

“They kept telling me ‘low risk’ like it was a lullaby,” she said. “I trusted it because they did. Because I am supposed to. I don’t . . . I don’t know when it became normal for a machine to say my son was fine when he wasn’t.” She swallowed and closed her eyes. “And I don’t know what to do with that now. I just—” She cut off, shaking her head. “I need to know if there’s anything we can do.”

Eliza held Mara’s gaze. “Okay,” she said. “A few questions, and then I’ll tell you what I can and cannot promise.”

Mara nodded.

“Did Dr. Green ever tell you the AI system could be wrong?”

“He said it was accurate,” Mara replied. “And that it reduced mistakes and had like ninety percent effectiveness or something,” she said, hurriedly. “He made it sound like it was better than people. You know, better than doctors.”

“Did he say you could opt out of AI diagnostics?” Eliza asked.

“No, he didn’t say that. It was just . . . there,” Mara said. “And he would only ever give us his diagnosis after he talked with that device.”

“Okay,” Eliza said, nodding. “And he made you sign a form regarding the AI usage?”

“Yes, I think so. I had to sign all sorts of things,” Mara said, exasperated. “Is that important for, like, a lawsuit?”

“It could be, yes. Most likely,” Eliza explained. “We’ll have to get a hold of that form, but it is probably their proof of informed consent. Basically, a doctor must reasonably inform you of the risks and alternatives associated with a procedure, test, or, in this case, the use of an AI assistant, and the doctor must obtain your consent after providing that information.”[15]

“So . . . is that it?” Mara asked.

“No, we have options,” Eliza continued, “but it is an uphill battle.”

“When is it not an uphill battle?” Mara said softly.

Eliza nodded curtly. “The best option would be to sue Dr. Green for medical malpractice and argue that he breached the applicable standard of care. I must tell you though, medical malpractice suits are notoriously hard to win.[16] I am not saying this to discourage you. I’m saying it so you know what kind of fight you’d be walking into.”

Mara’s jaw tightened. “Why is it so hard?”

“Because in court we need to prove a few specific things,” Eliza said, sliding a legal pad toward herself. She scratched a line. “First, we’d have to prove that Dr. Green breached the applicable standard of care. That means proving a reasonably competent doctor, faced with Stephen’s symptoms, would have done something different sooner.”[17]

“He should have,” Mara said quickly. “He should have looked deeper before October. He should have done that scan like Dr. Patel said.”

“Maybe,” Eliza said gently. “But ‘maybe’ isn’t enough in malpractice. We’d need an expert, another qualified doctor, to come in and say as much. Someone with credentials in gastroenterology or oncology. And usually, we’d need more than one expert.”

“How much do they cost?” Mara asked, apprehensively.

“They’re too expensive, honestly,” Eliza said plainly. “Generally, it costs thousands for them to review the file and often tens of thousands to testify. Their hourly rates are around $500 on average.”[18]

Mara’s gaze fell on her hands again.

When she looked up, Eliza continued, “Next, we have to prove that the delay in diagnosis is what led to Stephen’s death.[19] The defense would argue that the cancer was aggressive, that even earlier chemo might not have saved Stephen. To win in court, a jury has to be convinced that earlier treatment more likely than not would have changed the outcome.[20] Another expert question.”

Mara’s voice was low. “But it would have given him a chance.”

Eliza pursed her lips. “That’s the human truth. But our state doesn’t allow for what’s called ‘loss of chance.’ We would have to prove that Stephen would have lived had this diagnosis been made earlier, not just that he’d have had a better chance at surviving.”[21]

Mara swallowed. “That’s not fair.”

“I know. I’m sorry. The law can feel that way sometimes.”

Mara breathed out, “And what about the AI company? Or the hospital?”

Eliza leaned back slightly. This was the knot in the rope. “The AI problem is novel. It’s a new issue that courts haven’t faced yet,”[22] she said, watching Mara’s face to make sure she was following.

“Right now, there are big regulatory gaps regarding diagnostic AI usage by doctors and hospitals, and your case would depend on several factors. For instance, companies like DiagnoSmart usually shield themselves by saying, ‘We don’t practice medicine. The doctor makes the final decision, and our product simply augments, or supports, the doctor.’”[23]

“But the doctor trusted it,” Mara said, heat behind her words. “I trusted it . . . I trusted it because he did. I . . . I told Stephen to trust it,”[24] she said, her words breaking, and the veil fully dropping to allow Eliza to see her pain. The pain of a mother who lost her boy too young, not knowing whether she could have done anything differently. Anything at all.

Eliza slid the tissue box across the desk, not saying a word. Mara took two. Minutes passed, and the sound of fresh rain pattering against the windowsill harmonized with the quiet tears of a broken mother.

“Stephen told me, you know,” she said between breaths.

Eliza’s lips parted with a question, but she thought better of it and closed her mouth, waiting.

“He was worried that the AI machine was wrong. He felt it and researched it. And I . . . I—”

“You trusted your doctor,” Eliza cut in, tenderly. “You tried to help your son.”

Mara nodded repeatedly, quietly. “So is there nothing we can do?”

Eliza waited a moment. “We can try. There are theories—product liability, failure to warn—that could apply. But DiagnoSmart likely has a contract with Surrie Regional that limits their liability.”[25]

Mara looked at Eliza with a tired gaze. “So they can just write their way out?”

“They can try,” Eliza said. “Courts don’t always let them succeed. But it usually means more litigation and more money.”

Mara wrung her hands as if to wash them clean. “What about the hospital?”

“We can look at a claim against Surrie Regional too,” Eliza said. “But hospitals aren’t automatically on the hook for every doctor. Unless Dr. Green was their employee acting within the scope of his job, proving that the hospital is liable for this can be hard. If he was what we call an independent contractor, then the hospital will disclaim all liability. There are ways around that, but again . . . uphill.”[26]

“Everything is uphill,” Mara said, defeated.

“Yes,” Eliza said quietly. “That’s what I want you to understand before deciding anything.”

For a moment, the room was still except for the hum of the air vent and the rain’s steady rhythm against the window’s glass.

“I don’t even know if I can do this,” Mara said, closing her eyes. “I’m drowning in bills, and now if I sue, I have to hear people argue about my son like he was a chart. I don’t want to relive him dying in a courtroom.”

Eliza felt that in her chest. It was why she never told a client to sue right off the bat. You never knew what a person could survive twice.

“I understand completely,” Eliza responded.

“So what do we do?” Mara asked, red eyes opening.

“You can file suit and accept the risk. You can go through a large malpractice firm that can front expert costs and carry a longer fight. Another option is complaining to regulatory boards and hospital administration. Those will not get you damages the way a lawsuit might, but they can push change.”[27]

Mara looked uncertain. “Do those work?”

“They can,” Eliza said. “Especially with something new like AI. Hospitals are sensitive to scrutiny. If the tool caused harm, they would want to know.”

“Would you take my case?” Mara finally asked.

Eliza didn’t shy from the inevitable question. “I need to think carefully. On contingency, I would be paying expert costs up front with a low likelihood of success. I don’t want to promise what I can’t deliver.”

Mara nodded like she’d expected that answer but still needed to hear it.

“But if you want to pursue this,” Eliza added, “I won’t leave you without options, even if I don’t take your case. I can refer you to a malpractice group that handles high-cost cases. I can work with you on whichever route you choose to take. You don’t have to decide today.”

When the meeting finally wound to its end, Eliza walked Mara out the front door of her building. A burning sensation sat in Eliza’s stomach. It made her want to go back upstairs, open her laptop, and continue digging into this AI regulatory disaster.

Back at her desk, Eliza grabbed her yellow legal pad and wrote, “When a machine says ‘low risk,’ who pays for the risks it misses?” Her coffee cup stared up at her, filled to the brim and cold as the office air.

* * *

It was the morning of Stephen’s 18th birthday, March 18th, 2025. Mara Hart opened her son’s journal to reminisce. There, she found the story of a young boy named Corin who was betrayed by blue-light promises from a higher technology. After her tears subsided, Mara picked up her keys from the coffee table and marched to the door.

Twenty minutes later, she walked into Surrie Regional with a hurried, determined step. She was greeted by the smell of coffee and cleaning supplies as she strode up to the receptionist’s desk.

“Good morning,” the receptionist said with a smile.

“Good morning,” Mara responded, flatly. “Can I collect medical records here?”

“You can access those on your patient portal from your computer or mobile device,” she said, still cheery.

“I’m not good with that sort of thing,” Mara replied. “Can y’all print them here?”

“Well, sure. I guess we can!” the receptionist assured her.

The woman handed Mara a touchpad that bled blue light. Large letters marked the top of the screen, “DiagnoSmart 2.0.”

“Just fill in your information in those blanks, and I can process it here on my end. Then I’ll print it in the back for you.”

Mara filled out the information and handed the tablet back, a deep pit forming in her stomach.

“Pretty nifty, huh?” the receptionist said.

“DiagnoSmart?” Mara asked.

“Yeah, it is an AI diagnostic tool. We just received its new update!” she continued, kindheartedly. “It now has improved deep machine learning,” she said whimsically, as if telling a ghost story.

Mara blinked. “Did it learn from Stephen?”

“Who?”

APPENDIX: Ethical and Legal Issues Raised by “The Oracle Engine”

In writing “The Oracle Engine,” I set out to highlight issues posed by the inevitable proliferation of AI throughout the medical industry. First, this story is a cautionary tale about “automation bias” and “complacency.”[28] Although AI is a fantastic tool that should continue to be implemented in the medical industry, all healthcare providers need significant training to understand the strengths and pitfalls of the AI programs they use so that they can operate them safely and effectively. To combat automation bias and complacency, regulations should require careful human oversight of these systems at both the development and application levels. Second, this story raises several questions. How does AI interface with the relevant standard of care? When AI influences a healthcare provider’s diagnosis, should liability always fall on the provider, or are there instances where an AI company should be liable? And how should AI medical devices be regulated?

This appendix addresses these concerns in three parts: (I) how to combat automation bias and complacency; (II) an analysis of liability after AI’s introduction to healthcare; and (III) a brief discussion of federal regulations and a significant regulatory gap concerning medical AI.

- Automation Bias and Complacency

As AI becomes more capable and more deeply embedded across industries, the workforce will grow increasingly dependent on its support for complex reasoning and decision-making. Recognizing this danger, a study published in August 2025, Automation Complacency: Risks of Abdicating Medical Decision Making, examined the risk of automation bias and complacency in the healthcare industry.[29] The study describes automation bias as “the tendency to defer to decision support systems, replacing one’s own investigative means,” methods, and experience-based judgment.[30] “Automation complacency . . . arises when an operator or interactor of an automated system incorrectly assumes it is running smoothly and fails to account for future problems that may arise from this sort of negligence or low index of suspicion.”[31] Such complacency is “exacerbated by mechanical functions of routine jobs, like time pressure and workload,”[32] pressures with which physicians notoriously struggle.

The study concluded that AI systems “can enhance efficiency and outcomes in healthcare,” but at the risk of “erod[ing] vigilance, impoverish[ing] therapeutic relationships, and potentially [creating] poorer outcomes regarding overall well-being.”[33] Google Health’s AI system, for instance, “has demonstrated proficiency in detecting breast cancer in mammograms with greater accuracy than human experts.”[34] Yet the very proficiency of these programs invites physicians to over-rely on AI output.[35] A “2023 randomized crossover study found that clinicians of all expertise levels were vulnerable to automation bias, even though AI improved their overall diagnostic accuracy and efficiency. While assisted by AI, nearly half of the errors were associated with the misleading effect of [automation] bias.”[36] Thus, “[r]educed vigilance by human operators may compromise patient safety, quality of care, and clinical interactions.” If healthcare providers actively question an AI program’s recommendations, however, they can reduce automation bias and complacency and further improve patient outcomes.[37]

One purpose of “The Oracle Engine” was to draw the reader’s attention to Dr. Green’s reliance on DiagnoSmart. Although Dr. Green satisfied informed consent requirements and arguably met the standard of care, Dr. Green’s failure to question DiagnoSmart’s outputs harmed Stephen. Rather than exercising independent judgment, Dr. Green acted as a rubber stamp to confirm DiagnoSmart’s readings and as a microphone to relay its diagnoses to Stephen and Mara.

AI diagnostic programs are already used by 12% of doctors.[38] That number is likely to grow rapidly over the next decade, and diagnostic accuracy and efficiency are projected to improve as more advanced AI systems are developed and deployed. Nevertheless, a physician’s practical experience remains a necessary filter and translator for AI diagnostic outputs. A physician must be able to analyze the inputs given to an AI system alongside the system’s output, and he or she must discern whether the system is missing contextual cues that the physician understands.

Furthermore, a physician must be able to communicate an AI’s diagnosis effectively, confirm it independently through traditional methods, and inform the patient both of the biases inherent in AI systems and of the reasons why the AI’s diagnosis aligns or conflicts with the physician’s independent diagnosis. Such safeguards encourage physicians to think critically and can reduce automation bias and complacency, capturing AI’s diagnostic benefits while mitigating the harms of over-reliance. These safeguards can be implemented through state or federal regulation of AI developers and deployers.[39]

- Who is Liable for Malpractice Once AI Takes Root in Medicine?

Liability for injuries resulting from symbiotic decision-making between physicians and AI systems is a novel and unsettled legal issue. Ordinarily, when a healthcare provider delivers care or recommends treatment and an injury results, courts use well-established doctrines to allocate liability among the provider, the product manufacturer, and the patient.[40] Although physicians and manufacturers are held to different standards of care, the patient must still prove, as to each defendant, that the defendant owed the patient a duty, breached the applicable standard of care, and thereby caused the patient’s injury.[41] The introduction of AI into medicine complicates several aspects of this liability calculus, including the standard of care analysis, design-defect arguments, and causation.

First, AI’s complexity obscures the applicable standard of care. In North Carolina, for example, the standard of care requires a doctor to meet “the standards of practice among similar health care providers situated in the same or similar communities under the same or similar circumstances at the time of the alleged act giving rise to the cause of action.”[42] The applicable standard in a given case is often the subject of heated expert debate,[43] and the introduction of AI only raises that debate’s stakes and complexity. Now, in addition to asking which comparator community or level of training defines the physician’s standard of care, courts and juries must also consider the standards for using the AI system at issue.[44]

The logical question in this scenario is whether the physician’s adoption of an AI’s recommendation was unreasonable. In medical software cases, courts answer this standard of care question by evaluating a physician’s decision against “what other specialists would have done.”[45] However, because AI systems are such new additions to the healthcare industry, no standard of care for their use has yet been established. Moreover, comparing how a specialist would have used a traditional computer program to how a physician used artificial intelligence is inherently flawed. Traditional software is pre-programmed to process particular data in order to achieve a desired outcome.[46] AI, by contrast, learns, adapts, and predicts based on its training data.[47] AI’s output is therefore less predictable and should impose a higher duty of scrutiny on the physician. As a result, the standard of care analysis involving newly introduced medical AI devices will likely remain difficult and inconsistent until a larger body of case law develops or legislatures enact statutory standards.

Stephen’s story illustrates the importance of a doctor’s independent evaluation of an AI system’s output. Evidence of automation bias or complacency at trial may doom a physician’s defense, and rightfully so. In states that have not enacted specific physician-oversight requirements, courts should incorporate independent evaluation of AI diagnostic outputs into the relevant standard of care as they confront the inevitable flood of AI-related medical malpractice cases.

AI’s inherent complexity also complicates product liability law. Claims of software defects are already hard to sustain when the software’s functionality and inner workings are so complex that a specific design defect is difficult to pinpoint,[48] and “[t]he complexity and opacity of AI lead[s] to similar issues.”[49] Furthermore, it is nearly impossible to prove that a reasonable alternative design of an AI program exists, a requisite for design-defect liability.[50] AI models are enormous mathematical algorithms trained on even larger sets of data to identify statistical patterns and generate outputs accordingly.[51] Unless a plaintiff can prove that the model’s training data contained specific biases, it may be difficult to show a reasonable alternative design.[52] Plaintiffs may also struggle to prove that such a defect in the training data caused their injury.[53]

This brings us to the ultimate hang-up for plaintiffs in these cases: causation. Causation is notoriously difficult to prove in medical malpractice and product liability cases.[54] Now that a physician’s reasoning may depend on a supercomputer’s intellect, the causation question becomes even more difficult. Had Dr. Green or DiagnoSmart ordered a CT scan, would Stephen’s outcome have changed? Did Dr. Green or DiagnoSmart properly conduct and review Stephen’s endoscopy? In states that recognize the loss of chance doctrine, Stephen may be able to recover, provided, of course, that Dr. Green breached the standard of care.[55] In states that reject the doctrine, however, the causation analysis remains as difficult as ever, and adding an extra layer of super-intelligence only further strains a plaintiff’s resources to prove the case.

Ultimately, questions of liability in medical malpractice and product liability are inherently difficult, and AI tools further complicate the determination of the standard of care, design defects, and causation. For these reasons, states and the federal government should be encouraged to regulate the use and development of AI diagnostic systems to reduce defects proactively, rather than forcing injured plaintiffs to seek recourse after the harm is done.[56]

- Federal Regulation of AI Medical Products

Although “The Oracle Engine” does not explicitly address federal regulation, Mara’s anticipated fight against DiagnoSmart’s developer depends on federal law and on whether DiagnoSmart was a federally registered product. The Food and Drug Administration (“FDA”) defines “medical devices” as including “machines that are intended for use in diagnosing, treating, or preventing disease.”[57] DiagnoSmart would therefore likely fall under the FDA’s purview.[58] And it is not unreasonable to assume that the FDA is currently reviewing systems like DiagnoSmart, because the agency has already approved more than 1,000 AI tools as medical devices, most of them radiological in function.[59]

The current administration, however, is reducing rather than expanding federal oversight of AI.[60] “President Trump rescinded a 2023 executive order calling for a range of federal and private activities to strengthen AI governance in high-risk domains,” and the administration’s mass firing of FDA staff indicates that “stronger AI oversight is not commensurate with the administration’s goal of shrinking federal agencies.”[61] Accordingly, it is unclear how the FDA will keep pace with the already formidable challenge of regulating AI’s rapid growth in medicine.

“The Oracle Engine” also serves as a stepping stone to discuss the most prominent regulatory gap for AI medical devices: the FDA does not have authority to regulate physician and nurse conduct.[62] The FDA can require warnings and instructions for a product’s use, but “its oversight will never approach the level of governance needed to ensure safe and effective deployment of AI.”[63] For example, an AI medical device may win FDA approval because it functions flawlessly when the developer tests it in the controlled environment of a clinical trial. But, as in Stephen’s case, what happens when the device leaves that cozy clinical trial environment and lands in a short-staffed rural hospital whose exhausted doctors over-rely on it?

Therefore, to combat the improper use of AI in healthcare discussed in Part I of this Appendix, it falls to state legislatures and other federal agencies to create regulatory frameworks that monitor physicians’ use of AI.[64] “The Centers for Medicare and Medicaid Services is an obvious candidate for regulating tools” that health care providers use, and the agency should use its authority to create comprehensive oversight requirements for AI medical devices.[65] Ultimately, “The Oracle Engine” aims to shed light on the real and frighteningly simple consequences of relying on a medical device as ethereal and incomprehensibly complex as artificial intelligence. In embracing its support, let us not surrender our own intelligence.

[1] Tanya Albert Henry, 2 in 3 Physicians are Using Health AI – up 78% from 2023, Am. Med. Assoc. (Feb. 26, 2025), https://www.ama-assn.org/practice-management/digital-health/2-3-physicians-are-using-health-ai-78-2023 (discussing that, for example, 12% of doctors use AI for assistive diagnosis, 10% of physicians have a patient-facing chatbot for health recommendations and self-care engagement, and 21% use AI to document billing codes, medical charts, or visit notes).

[2] Carolyn Tarrant, et al., Continuity and Trust in Primary Care: A Qualitative Study Informed by Game Theory, 8 Annals Fam. Med. 440 (2010) (A United Kingdom research team in 2010 conducted an empirical investigation of patients’ accounts of their trust in physicians. The results showed that “[p]eople use institutional trust, derived from expectations of medicine as an institution and doctors as professionals, as a starting point for their transactions with unfamiliar doctors.”).

[3] In multiple fields, including healthcare, researchers have found evidence of cognitive atrophy due to overreliance on AI. See Liz Mineo, Is AI Dulling Our Minds?, Harv. Gazette: Science & Tech (Nov. 13, 2025), https://news.harvard.edu/gazette/story/2025/11/is-ai-dulling-our-minds/; see also Krzysztof Budzyń, et al., Endoscopist Deskilling Risk After Exposure to Artificial Intelligence in Colonoscopy: a Multicentre, Observational Study, 10 Lancet Gastroenterol Hepatol 896 (2025), https://www.thelancet.com/journals/langas/article/PIIS2468-1253(25)00133-5/fulltext; see also Nataliya Kosmyna, et al., Your Brain on ChatGPT: Accumulation of Cognitive Debt When Using an AI Assistant for Essay Writing Task, arXiv (Jun. 1, 2025), https://arxiv.org/abs/2506.08872 (It should be noted that papers in arXiv’s database are typically pre-publication and may not yet have been peer reviewed.).

[4] See James L. Cross, Michael A. Choma & John A. Onofrey, Bias in Medical AI: Implications for Clinical Decision-making, PLOS Digital Health at 3 (Nov. 7, 2024), https://pmc.ncbi.nlm.nih.gov/articles/PMC11542778/. Although AI systems are remarkably good at pattern recognition and can be highly accurate on average, systems like DiagnoSmart are disconnected from the exam room itself, making them blind to human signals that could push a doctor to reconsider an unlikely diagnosis. See also Michelle M. Li, et al., One Patient, Many Contexts: Scaling Medical AI with Contextual Intelligence, arXiv 1, 1–2 (Jun. 11, 2025), https://arxiv.org/abs/2506.10157 (Clinicians do not diagnose only by matching symptoms to data points like an AI. Instead, they use clinical context “to take into account all ambiguous information and patient preferences” in order to produce a diagnosis specific to a patient.); see also Teus H. Kappen, et al., Decision Support in the Context of a Complex Decision Situation, arXiv 1 (Mar. 2, 2024), https://arxiv.org/abs/2003.00921 (Such context could include sensory cues that a patient may not articulate on paper or that may only be discovered through human interaction or abstract knowledge, as discussed by Tikhomirov and colleagues.). See Lana Tikhomirov, et al., Medical Artificial Intelligence for Clinicians: the Lost Cognitive Perspective, 6 Lancet Digit. Health 589, 589 (2024), https://www.sciencedirect.com/science/article/pii/S2589750024000955. Although Tikhomirov’s study considered specific sensory cues that radiologists understand, it is fair to say that the way a patient walks, how he winces when he breathes, the fatigue in his voice, and the way his parent answers for him are sensory cues that could lead a doctor to alter a diagnosis. Because AI systems lack this surrounding context, they must be specifically provided with it.
Another possible flaw in AI pattern recognition is that the datasets on which an AI system is trained affect its diagnostic accuracy. See also Ted A. James, Confronting the Mirror: Reflecting on Our Biases Through AI in Health Care, Harv. Med. Sch. (Sep. 24, 2024), https://learn.hms.harvard.edu/insights/all-insights/confronting-mirror-reflecting-our-biases-through-ai-health-care (“An AI model cannot question its dataset in the same way clinicians are encouraged to question the validity of what they have been taught.” Tikhomirov, supra note 4, at 590. If an AI system was trained only on individuals of one ethnicity, it may have lower diagnostic accuracy when diagnosing an individual of a different ethnicity. Cross, supra note 4, at 4.)

[5] Adam Hadhazy, Can AI Improve Medical Diagnostic Accuracy?, Stan. U. HAI (Oct. 28, 2024), https://hai.stanford.edu/news/can-ai-improve-medical-diagnostic-accuracy (In a Stanford study, ChatGPT on its own posted a median diagnostic score of 92%, while AI-assisted and unassisted physicians posted median diagnostic accuracy scores of 76% and 74%, respectively.)

[6] Gastritis, Cleveland Clinic, https://my.clevelandclinic.org/health/diseases/10349-gastritis (last visited Nov. 29, 2025) (A healthcare provider will “not know for sure if you have [gastritis] without testing for it. . . . The best way to confirm gastritis is by taking a tissue sample during an endoscopy,” but doctors will “usually recognize gastritis visually even before the biopsy confirms it.”).

[7] Nimish Vakil, Gastritis, Merck Manual (Jan. 2025), https://www.merckmanuals.com/home/digestive-disorders/gastritis-and-peptic-ulcer-disease/gastritis (explaining that bleeding can result from gastritis and can remain undetected if the bleeding is “mild and slow”).

[8] Budzyń, supra note 3, at 901 (Researchers discovered that endoscopists who had been introduced to an AI-assisted system during colonoscopies and subsequently had the system removed experienced a decline in their detection rates from 28.4% to 22.4%.).

[9] Rishi Megha, Umer Farooq & Peter P. Lopez, Stress-Induced Gastritis, National Library of Medicine (Apr. 16, 2023), https://www.ncbi.nlm.nih.gov/books/NBK499926/ (explaining that stress responses can lead to a decrease in gastric renewal and decreased blood flow to the stomach, resulting in atrophy of the gastric mucosa and making the stomach more prone to ulceration and hyperacid secretion); see also Gastritis, Fla. Digestive Health Specialists, https://www.fdhs.com/patient-information/gi-conditions/gastritis/ (last visited Nov. 29, 2025) (illustrating various causes of gastritis such as H. pylori, prolonged use of NSAIDs, excessive alcohol consumption, autoimmune diseases, and severe stress).

[10] Daphne Berryhill, Esomeprazole (Nexium) vs. Omeprazole (Prilosec): 7 Things to Know When Comparing These PPIs, GoodRx (Mar. 25, 2025), https://www.goodrx.com/classes/proton-pump-inhibitors/esomeprazole-vs-omeprazole-for-acid-reflux (“An analysis of four studies found that esomeprazole lowered stomach acid levels more than four other PPIs, including omeprazole, in people with GERD.”).

[11] . . . Scribes wrote down the result, priests blessed it, and families went home holding onto a blue light like an omen. The old healers still kept their shutters open on the lower streets, but most days they sat with clean hands, watching the hill and saying that the Oracle brought progress.

One evening, a boy named Corin climbed to the Engine with his arm wrapped tight around his middle. Something inside him had been stubbornly aching for so long that his gait bore a hunch. His mother walked beside him, whispering all the reasons that it would be nothing.

“The Oracle will know,” she repeated, as if the words could dull the pain.

When Corin stepped into the circle, the Oracle’s brass ribs shivered, and its crystal eyes flared. After a moment, filled only by the whistling of hot steam exhaled by the Engine’s breath, the faintest blue light peered from the machine’s heart.

“Safe,” the scribe said as he jotted a note down with his quill.

Corin forced a smile at his mother as they descended the hill. But as they walked down past the quiet healers’ shops, he kept his hand pressed into the indented holster it had formed against his side, wondering why the lingering pain in his gut didn’t retreat with the blue light that faded behind him . . . .

[12] Mahmud Omar, et al., Editor’s Pick: Study Finds AI Medical Tools Show Bias, Potential for Misdiagnosis and Patient Harm, UCSF (Apr. 7, 2025), https://codex.ucsf.edu/news/editors-pick-study-finds-ai-medical-tools-show-bias-potential-misdiagnosis-and-patient-harm.

[13] Masau Sekiguchi & Ichiro Oda, High Miss Rate for Gastric Superficial Cancers At Endoscopy: What Is Necessary for Gastric Cancer Screening and Surveillance Using Endoscopy?, 5 Endosc. Int. Open 727, 727 (2017) (a “study concluded that 11.3% of upper GI cancers were missed at endoscopy performed within 3 years before the diagnosis, and showed that 0.25% of all the endoscopic procedures missed existing upper GI cancers.”).

[14] How Fast Do Gastrointestinal (GI) Cancers Progress?, Digestive & Liver Disease Consultants, P.A. (Oct. 16, 2025), https://www.gimed.net/blog/how-fast-do-gastrointestinal-gi-cancers-progress/.

[15] See Canterbury v. Spence, 464 F.2d 772, 782 (D.C. Cir. 1972) (A doctor has “a duty to warn of the dangers lurking in the proposed treatment,” and “a duty to impart information which the patient has every right to expect,” such as “reasonable disclosure of the choices with respect to proposed therapy and the dangers inherently and potentially involved.”).

[16] Burton Craige, The Medical Malpractice “Crisis”: Myth and Reality, 9 NC St. B. J. 8, 9 (2004) (“Studies have repeatedly confirmed what lawyers know from experience: malpractice plaintiffs in North Carolina win at trial about 20% of the time.”); Philip G. Peters, Twenty Years of Evidence on the Outcomes of Malpractice Claims, 467 Clin. Orthop. Relat. Rsch. 352, 352 (2008) (“Physicians win 80% to 90% of the jury trials with weak evidence of medical negligence, approximately 70% of the borderline cases, and even 50% of the trials in cases with strong evidence of medical negligence.”).

[17] Some states like North Carolina have codified the standard of care. N.C. Gen. Stat. § 90-21.12 (The standard of care requires a doctor to meet “the standards of practice among similar health care providers situated in the same or similar communities under the same or similar circumstances at the time of the alleged act giving rise to the cause of action.”). Other states leave the standard of care to the common law. See, e.g., Nestorowich v. Ricotta, 767 N.E.2d 125 (N.Y. 2002) (stating that “a doctor [must] exercise ‘that reasonable degree of learning and skill that is ordinarily possessed by physicians and surgeons in the locality where [the doctor] practices’”) (quoting Pike v. Hosinger, 49 N.E. 760 (N.Y. 1898)).

[18] See Expert Witness 101, Physician Side Gigs, https://www.physiciansidegigs.com/expert-witness-101 (last visited Nov. 26, 2025) (listing average rates for expert witness work in medical malpractice suits and explaining to other physicians how “lucrative and fun” being an expert witness can be).

[19] See Stukas v. Streiter, 83 A.D.3d 18, 23 (N.Y. App. Div. 2011) (“In order to establish the liability of a physician for medical malpractice, a plaintiff must prove that the physician deviated or departed from accepted community standards of practice, and that such departure was a proximate cause of the plaintiff’s injuries.”).

[20] See Fitzgerald v. Manning, 679 F.2d 341, 348 (4th Cir. 1982) (concluding that the plaintiff must show that “more likely than not” the physician’s negligence “was a substantial factor in bringing about the result”).

[21] The “loss of chance” doctrine allows “a plaintiff, or her survivors, to recover for a doctor’s negligence that reduces the chance of recovery or survival, regardless of whether the patient’s chance of survival was above or below 50% at the time of the doctor’s alleged negligence.” Charles A. Jones, Prashant K. Khetan & Whitney Lindahl, The “Loss of Chance” Doctrine in Medical Malpractice Cases, Troutman Pepper Locke: Articles + Publ’ns (Mar. 13, 2013), https://www.troutman.com/insights/the-loss-of-chance-doctrine-in-medical-malpractice-cases/. A number of states recognize the loss of chance doctrine, while eight states have clearly rejected its application. See id.

[22] Austin Littrell, The New Malpractice Frontier: Who’s Liable When AI Gets It Wrong, 102 Med. Econ. J. 5, 5 (2025) (“So far US courts have not seen a malpractice case where AI is at the center of the claim.”).

[23] Michelle M. Mello & I. Glenn Cohen, Regulation of Health and Health Care Artificial Intelligence, 333 JAMA 1769, 1770 (2025).

[24] See Mineo, supra note 3.

[25] See Mello & Cohen, supra note 23, at 1770 (AI developers may “limit liability and warranties, for instance, eschewing any representation that the outputs of their tool are accurate or capping how much they will pay in a lawsuit.”).

[26] See Valerio v. Liberty Behav. Mgmt. Corp., 188 A.D.3d 948, 949–50 (N.Y. App. Div. 2020) (explaining the requirements necessary to hold a hospital vicariously liable for the actions of an employee physician or an independent-contractor physician).

[27] See Tristan McIntosh, et al., What Can State Medical Boards Do to Effectively Address Serious Ethical Violations?, 51 J.L. Med. & Ethics 941, 941 (2023) (discussing the disciplinary actions state medical boards can use to protect the public); Matthew Bauer, Malpractice Lawsuits vs Medical Board Complaints, SVMIC (Jan. 2025), https://www.svmic.com/articles/444/malpractice-lawsuits-vs-medical-board-complaints (explaining the differences between malpractice suits and medical board complaints); How License Complaints Differ from Malpractice Claims, CM&F Group (Nov. 29, 2024), https://www.cmfgroup.com/blog/healthcare-professionals/how-license-complaints-differ-from-malpractice-claims/ (same).

[28] Michael I. Saadeh, et al., Automation Complacency: Risks of Abdicating Medical Decision Making, 5 AI and Ethics 5783, 5783 (2025).

[29] Id.

[30] Id. at 5785.

[31] Id. (internal quotation marks and citation omitted).

[32] Id.

[33] Id. at 5783.

[34] Selçuk Korkmaz, Artificial Intelligence in Healthcare: A Revolutionary Ally or an Ethical Dilemma?, 41 Balkan Med. J. 87, 87 (2024).

[35] Saadeh, et al., supra note 28, at 5785.

[36] Id. at 5786.

[37] Id. at 5785.

[38] See id.

[39] In 2025 alone, states introduced over 20 bills concerning the use of AI in clinical care, addressing provider oversight and transparency requirements and creating safeguards against bias and misuse of health information. Jared Augenstein, et al., Manatt Health: Health AI Policy Tracker, Manatt (Dec. 16, 2025), https://www.manatt.com/insights/newsletters/health-highlights/manatt-health-health-ai-policy-tracker. For instance, Texas enacted House Bill 149, which requires providers to be transparent about their use of AI in diagnosis and treatment. Tex. H.B. 149, 89th Leg., R.S. (2025). Furthermore, Texas’s Senate Bill 1188, which became effective on September 1, 2025, requires providers to review all AI-generated records to ensure the data is accurate and properly managed. Tex. S.B. 1188, 89th Leg., R.S. (2025). Providers must also review AI-generated diagnoses and retain final responsibility for any clinical decisions. Id.

[40] Michelle M. Mello & Neel Guha, Understanding Liability Risk from Using Health Care Artificial Intelligence Tools, 390 NEJM 271, 271 (2024).

[41] Id.

[42] N.C. Gen. Stat. § 90-21.12.

[43] What Do You Need to Know About the Standard of Care?, Miller Wagner, https://www.miller-wagner.com/articles/standard-of-care/ (last visited Nov. 29, 2025).

[44] This also raises the question: at what point in the future will it be patently unreasonable for a doctor not to use an AI system? Today, it is hard to consider AI’s effect on the standard of care in terms of liability. But tomorrow, AI will inevitably raise the standard of care because “the standard practice among similar health care providers” will be to use these diagnostic tools.

[45] Mello & Guha, supra note 40, at 273.

[46] What’s the Difference Between AI and Regular Computing?, The Royal Institution (Dec. 12, 2023), https://www.rigb.org/explore-science/explore/blog/whats-difference-between-ai-and-regular-computing.

[47] Id.

[48] Mello & Guha, supra note 40, at 274.

[49] Id.

[50] Id. at 271.

[51] Cross, supra note 4, at 3.

[52] Mello & Guha, supra note 40, at 272.

[53] Id.

[54] Which Element of Medical Malpractice is Hardest to Prove?, Marks & Harrison (May 1, 2025), https://www.marksandharrison.com/blog/which-element-of-medical-malpractice-is-hardest-to-prove/; Common Challenges in Product Liability Cases and How Law Firms Overcome Them, Finch McCranie, LLP (May 27, 2024), https://www.finchmccranie.com/blog/common-challenges-in-product-liability-cases-and-how-law-firms-overcome-them/.

[55] See supra note 21.

[56] For example, Colorado requires that both AI developers and deployers exercise reasonable care to shield consumers from “any known or reasonably foreseeable risks of ‘algorithm discrimination.’” Pat G. Ouellette, Colorado AI Systems Regulation: What Health Care Deployers and Developers Need to Know, Mintz (Jun. 27, 2024), https://www.mintz.com/insights-center/viewpoints/2146/2024-06-27-colorado-ai-systems-regulation-what-health-care (quoting Colo. S.B. 24-205, 75th Gen. Assemb. (2024)). Colorado’s bill defines algorithm discrimination as “any condition in which the use of an AI System results in an unlawful differential treatment or impact that disfavors an individual or group of individuals on the basis of their actual or perceived age, color, disability, ethnicity, genetic information, limited proficiency in the English language, national origin, race, religion, reproductive health, sex, veteran status” or other classification protected under Colorado or federal law. Colo. S.B. 24-205, 75th Gen. Assemb. (2024). If a developer or physician discovers such a risk, they must disclose the problem to the Colorado Attorney General within 90 days of discovery. Id.

[57] Mello & Cohen, supra note 23, at 1769.

[58] If DiagnoSmart is an FDA-registered medical device, federal preemption law is also implicated. This paper does not consider preemption, though it is safe to say that preemption would further complicate a potential lawsuit. For instance, some of Mara’s state product liability claims may be preempted by the FDA’s premarket approval process, as the Supreme Court held in Riegel v. Medtronic, Inc., 552 U.S. 312 (2008).

[59] Mello & Cohen, supra note 23, at 1769.

[60] Id.

[61] Id.

[62] FDA’s Role in Regulating Medical Devices, FDA, https://www.fda.gov/medical-devices/home-use-devices/fdas-role-regulating-medical-devices (last visited Nov. 29, 2025).

[63] Mello & Cohen, supra note 23, at 1769.

[64] See supra Part I; id.

[65] Mello & Cohen, supra note 23, at 1769.